What Does Agentic AI Mean?

The term agentic AI has been so abused by hype marketers it doesn't mean anything anymore.

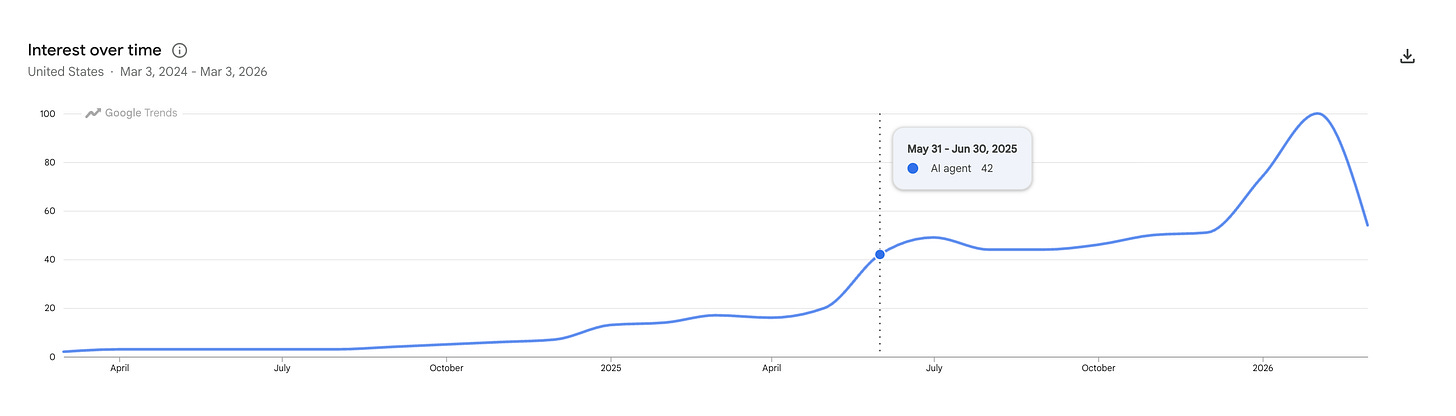

The last two years of AI agent mentions, according to Google Trends.

I will admit it. I am really struggling with any piece of AI content that appears in my feed or lands in my inbox with the word agentic or agent in it. But this skepticism, while grounded in experience, is a barrier to embracing potentially useful new tools. In the end, if a tool is useful, it can be called chicken fried rice for all I care.

Still, I was just a dash giddy when I saw the term is losing steam on the Interwebs, according to Google Trends data (see above). I do believe the spike was created by OpenClaw, a social network where agents can talk to other agents in a highly automated, robotic world. How comforting to see that the concept, while novel and chatworthy, has lost a dramatic amount of steam since its 5 minutes of fame.

OpenClaw reminds me of the insane asylum in One Flew Over the Cuckoo’s Nest.

Still, it’s important to understand what is actually being sold to me and the larger business world. Having the agency to execute minor or, as many vendors promise, more complex work tasks without human intervention is the goal of agentic AI. I began writing about agents in 2024, and offered a pragmatic view of what they could offer enterprises in early 2025.

Since then, the term “agent” and its derivatives have been so abused by AI vendor marketing and pundits that they just don’t mean anything anymore. Worse, it’s an inside-baseball conversation that larger industries don’t care about.

Don’t believe me? Try explaining the agentic trend to normal business people. I often don’t even bother, dismissing the term as hype. Instead, I explain what each technology actually does in any particular instance. After all, agent is a descriptor.

Marketing Hype versus Reality

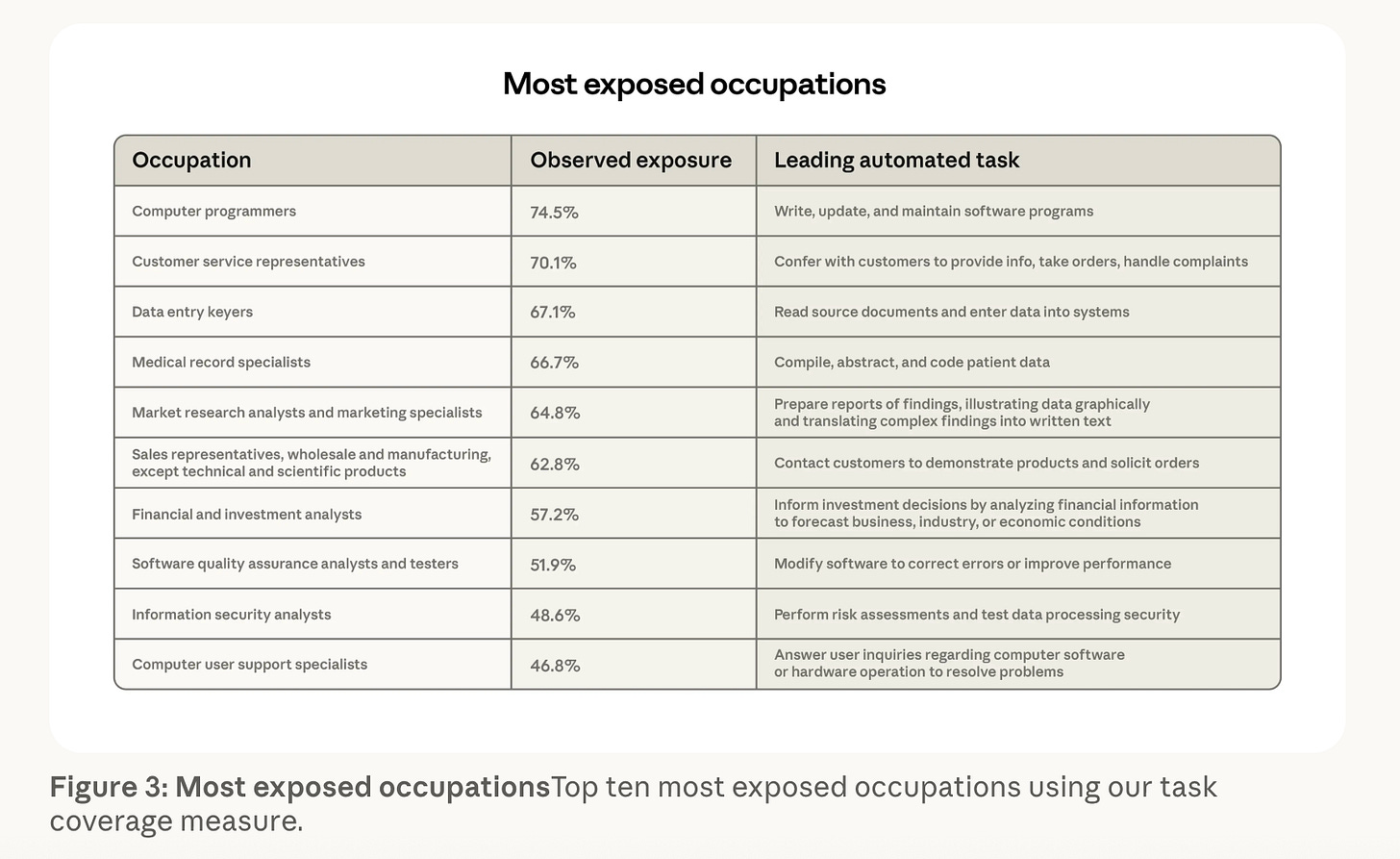

Anthropic’s viral report, “Labor market impacts of AI: A new measure and early evidence,” highlighted the occupations most at risk from AI agents. The report notes no real job losses yet but highlights a slowdown in hiring new junior staff and freelancers in these markets. As a former CMO, I was not happy to see marketing listed at number five and sales at six. Yikes!

What an unfortunate decision to market a confusing trend within a confusing trend. AI adoption was already hard; let’s make it more complicated (and scary) with a metalevel of hype. After all, multimodal and AGI didn’t work, but maybe agents will?

Let’s tell everyone they will have their work replaced with AI bots called agents, which are nifty and will save businesses time and money. We will create this dynamic future and let individual vendors sell it to their customers. The vendors will apply it to the domain-specific business model and have it make sense to business owners. It’s a no-brainer for success.

Worse are the AI-centric management consultant reports, from Gartner to McKinsey, claiming 40% adoption in enterprises. Or the even more incredible vendor claims that they are replacing substantial portions of their staff with AI agents.

Meanwhile, a study in the Harvard Data Science Review published in January notes that while 78% of companies claim to use AI, 80% report no measurable impact on earnings because they apply AI to human-centric workflows rather than redesigning them for agents (Kruhse-Lehtonen & Hofmann, 2026).

A January 2026 report from the Dallas Fed noted the impact on the national unemployment rate remains negligible, estimated at only a 0.1 percentage point increase since late 2022. While the overall rate sits at roughly 4.3%, the Fed attributes most of the recent cooling to high interest rates and a "low-hiring, low-firing" equilibrium rather than AI-driven mass layoffs.

Houston, we have an adoption hype conflict. Sometimes I wonder if technology companies are eating gummies when they come up with this stuff. I know you feel my disdain as a former CMO. This is malpractice.

Almost every successful “agent” I find is really just an automation (RPA, or robotic process automation, for those on the technical side of the house), usually for a basic task, like automatically sorting monthly expenses and analyzing spending trends.

And there is great value in that. It can save a ton of time understanding a company or department’s burn rate, which is very useful for a manager. For an accountant, it can save time on reconciliation.
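To make that concrete, here is a minimal sketch of what such an expense automation might look like under the hood. The keyword rules, category names, and sample expenses are all made up for illustration; a real setup would use the company's own chart of accounts. Note there is no LLM and no "agent" here, just rules.

```python
from collections import defaultdict

# Hypothetical keyword rules, invented for this example.
RULES = {
    "software": ["aws", "slack", "github"],
    "travel": ["airlines", "hotel", "uber"],
    "marketing": ["google ads", "linkedin"],
}


def categorize(description: str) -> str:
    """Return the first category whose keyword appears in the description."""
    text = description.lower()
    for category, keywords in RULES.items():
        if any(keyword in text for keyword in keywords):
            return category
    # Anything the rules miss is left for a human to sort.
    return "uncategorized"


def monthly_totals(expenses):
    """Sum amounts per month (YYYY-MM) and per category."""
    totals = defaultdict(lambda: defaultdict(float))
    for date, description, amount in expenses:
        month = date[:7]  # "2026-01-05" -> "2026-01"
        totals[month][categorize(description)] += amount
    return {month: dict(cats) for month, cats in totals.items()}


# Made-up sample data: (ISO date, description, amount).
expenses = [
    ("2026-01-05", "AWS cloud services", 1200.00),
    ("2026-01-12", "Delta Airlines ticket", 450.00),
    ("2026-01-20", "Office plant", 35.00),
    ("2026-02-03", "Google Ads campaign", 800.00),
    ("2026-02-10", "AWS cloud services", 1300.00),
]

report = monthly_totals(expenses)
for month, categories in sorted(report.items()):
    print(month, categories)
```

The "uncategorized" bucket is the point: whatever the rules cannot place is surfaced rather than guessed at.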

The reality is that automations are very good at processing rote tasks and processes. They still require a human in the loop to verify the outputs, just as you would want a manager or an executive in charge to check the veracity of a junior employee’s work. That is because the “decision” logic comes from large language models or chat-centric generative AI tools.
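One common way to build a human into the loop is a simple review gate, sketched below. It assumes the tool attaches a confidence score to each decision, which not every product does; the `Decision` class, the 0.9 threshold, and the sample items are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    item: str
    label: str
    confidence: float  # hypothetical score between 0 and 1


def route(decisions, threshold=0.9):
    """Split decisions into auto-approved and needs-human-review lists."""
    auto_approved, needs_review = [], []
    for decision in decisions:
        if decision.confidence >= threshold:
            auto_approved.append(decision)
        else:
            needs_review.append(decision)
    return auto_approved, needs_review


# Made-up outputs from an imagined model.
decisions = [
    Decision("Invoice 1001", "software", 0.97),
    Decision("Invoice 1002", "travel", 0.62),
    Decision("Invoice 1003", "marketing", 0.91),
]

auto_approved, needs_review = route(decisions)
print("Auto:", [d.item for d in auto_approved])
print("Review:", [d.item for d in needs_review])
```

Even the auto-approved pile deserves a spot check, since a model's confidence score is not the same thing as being right.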

The agents that do incorporate complex business tasks and use an LLM to make decisions tend to struggle a bit in my experience. Outcomes range from good to just plain bad. Reliability is generally the issue, which gets back to using an LLM as the decision engine. Hallucinations and decisions based on pattern-recognition-based probability (as opposed to hard machine-learning data-driven analytics or simple RPAs) can miss the answer.

AI agent work, while useful, cannot be taken at face value, at least until agents achieve 99.9% accuracy. Agents rarely reach even 90% accuracy, much less the 99.9% bar. That’s just not business-ready.
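A bit of back-of-the-envelope arithmetic shows why the gap between 90% and 99.9% matters so much. The sketch below makes two simplifying assumptions of my own: a batch of 1,000 tasks, and a ten-step workflow where each step succeeds or fails independently of the others. Real workflows are messier, so treat the numbers as illustrative only.

```python
def expected_errors(accuracy: float, tasks: int = 1000) -> float:
    """Expected number of wrong outputs in a batch of tasks."""
    return (1 - accuracy) * tasks


def chain_success(step_accuracy: float, steps: int) -> float:
    """Chance a multi-step task finishes with every step correct,
    assuming (simplistically) that step errors are independent."""
    return step_accuracy ** steps


for accuracy in (0.90, 0.999):
    errors = expected_errors(accuracy)
    chained = chain_success(accuracy, steps=10)
    print(f"{accuracy:.1%} accurate: ~{errors:.0f} errors per 1,000 tasks, "
          f"{chained:.1%} chance a 10-step task is fully correct")
```

At 90% per-step accuracy, roughly one in three ten-step tasks comes out fully correct under these assumptions; at 99.9%, about 99% do. That is the difference between a tool you must re-check constantly and one you can spot-check.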

Consider our accounting example. Regardless of how great the automation is, you would want an accountant to quickly verify. You certainly wouldn’t want to use an AI agent to blindly file your taxes. That doesn’t mean the software service can’t help you prepare your taxes. Indeed, it might make the task a lot less painful.

Conclusion

As I noted, my bias against agentic AI hype is actually a barrier for me. I have to really try to check this contempt at the door, for behind the hype, there may actually be a useful tool that can help a client or me. That’s what matters, not how a company or influencer overhypes and confuses the market.

That’s why I increasingly ignore the term agent as marketing slop, and get right down to examining the actual technology. This allows me not to get hung up on the terminology.

Can the software service help get work done faster and execute rote tasks more accurately than a human can? Will it benefit the humans responsible by making their jobs easier, allowing them to focus on the larger project and its qualitative output? Does it help me achieve my mission? If the technology does not offer a clear use-case benefit to my mission (customer journeys, for example), I choose to pass on it.

Agents make for a great shiny object. Smart business people will recognize the hype for what it is and look deeper.